April 16, 2026

How Startups Can Beat Big AI Labs

Anthropic and OpenAI kill lots of startups. Don't be one of them.

So, you want to build an AI startup? I’ve got some bad news for you. Big AI labs like Anthropic and OpenAI are releasing features that could replace your entire product. While it may still be possible to outwork and outmaneuver big tech companies, their unlimited inference budgets and early access to next-generation models mean small companies can really struggle to keep up these days. But we have identified a couple of wedges you can use to position yourself in the market.

Death by Bundle

The worst thing that can happen is for your product to get rolled into their subscription bundles. Anthropic and OpenAI both offer $20, $100, and $200 per month subscriptions with subsidized AI inference pricing. Everyone wants to use these subscriptions because their value can’t be beaten. If your product tries to use these subscriptions on behalf of users, not only does this make your business model difficult, but the big labs can also ban your application from using the subscription plan. Just look at what Anthropic did to OpenClaw.

So your goal, as an AI founder, is to do everything you can to reduce the chance that your product gets rolled into their bundles.

Compete on Price

You can never price below an AI lab. It’s just not possible. You have to buy inference from them, and they charge a markup. Even if they sold at cost, they have also raised much more capital than you and can offer bigger subsidies to their users.

Instead of pricing low, you can win by pricing high.

Your product should cost at least $500 per month per seat. Or ideally, $5000 per month per seat. The higher your price, the more defensible your product will be.

But with this high price comes a high responsibility to deliver. You can’t price a simple app at $5000 per month. You need to have relatively low margins on that revenue. With a $5000 per month subscription, you should aim for inference costs to be at least $2500. If your inference costs are $4000, that is even more defensible… but you need to balance defensibility with business viability—and low margins make building a profitable business very difficult.
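A quick way to sanity-check these numbers is to compute gross margin, the share of revenue left after paying for inference. This sketch assumes inference is the only cost counted (no salaries, hosting, or sales), so it overstates real margins; `gross_margin` is just an illustrative helper.

```python
def gross_margin(price: float, inference_cost: float) -> float:
    """Share of per-seat revenue left after paying for inference.

    Assumes inference is the only cost; real products also pay for
    staff, hosting, and sales, so true margins are lower.
    """
    return (price - inference_cost) / price


# The two inference budgets from the $5000-per-seat example.
for cost in (2500, 4000):
    print(f"${cost} inference on a $5000 seat -> {gross_margin(5000, cost):.0%} gross margin")
```

$2500 of inference leaves a 50% gross margin and $4000 leaves 20%, which is the trade-off between defensibility and viability described above.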

Your ultimate goal is to build a product that lets people command the productive output of as many tokens as possible.

Doing this works because it wrecks the economics of the big labs’ bundles. You can vibecode an app for less than $200 in tokens. That is why a Claude Code subscription can cost $200: most people won’t use that much, even though some power users use more. Overall, this makes the subscriptions profitable for the labs.
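The bundle logic can be sketched as a flat price minus the average inference cost across subscribers. The per-user costs below are made-up figures for illustration only (real usage data isn’t public), and `bundle_margin` is a hypothetical helper.

```python
def bundle_margin(price: float, usage_costs: list[float]) -> float:
    """Average monthly profit per subscriber on a flat-price plan.

    usage_costs holds each subscriber's inference cost for the month.
    """
    return price - sum(usage_costs) / len(usage_costs)


# Hypothetical mix: most users stay well under $200, one power user goes far over.
typical = [40.0, 60.0, 90.0, 120.0, 600.0]
# Hypothetical: every user runs a product that burns $1500 of tokens a month.
heavy = [1500.0] * 5

print(bundle_margin(200.0, typical))  # 18.0: still profitable despite the power user
print(bundle_margin(200.0, heavy))    # -1300.0: a $200 plan cannot carry this usage
```

An average hides the shape of the distribution, but the point holds: a flat price only works while typical usage stays below it.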

Let’s say that Claude Code enables a user to vibecode an app for $150 in tokens. You want your product to be able to build a better version of that same app by increasing inference usage to $1500. The inference cost is 10x greater, but the app itself doesn’t need to be 10x better. It can be 2x better, or even just 20% better.

There will be a big market for this. Yes, it will price out budget users—but many serious enterprises will gladly pay more for better results. The fact is that artificial intelligence is a huge arbitrage on human intelligence. Even without the utmost cost efficiency, AI is still much cheaper than humans doing the work. Therefore, scaling AI usage to produce a better result is worth paying more for despite the fact that it has diminishing returns.

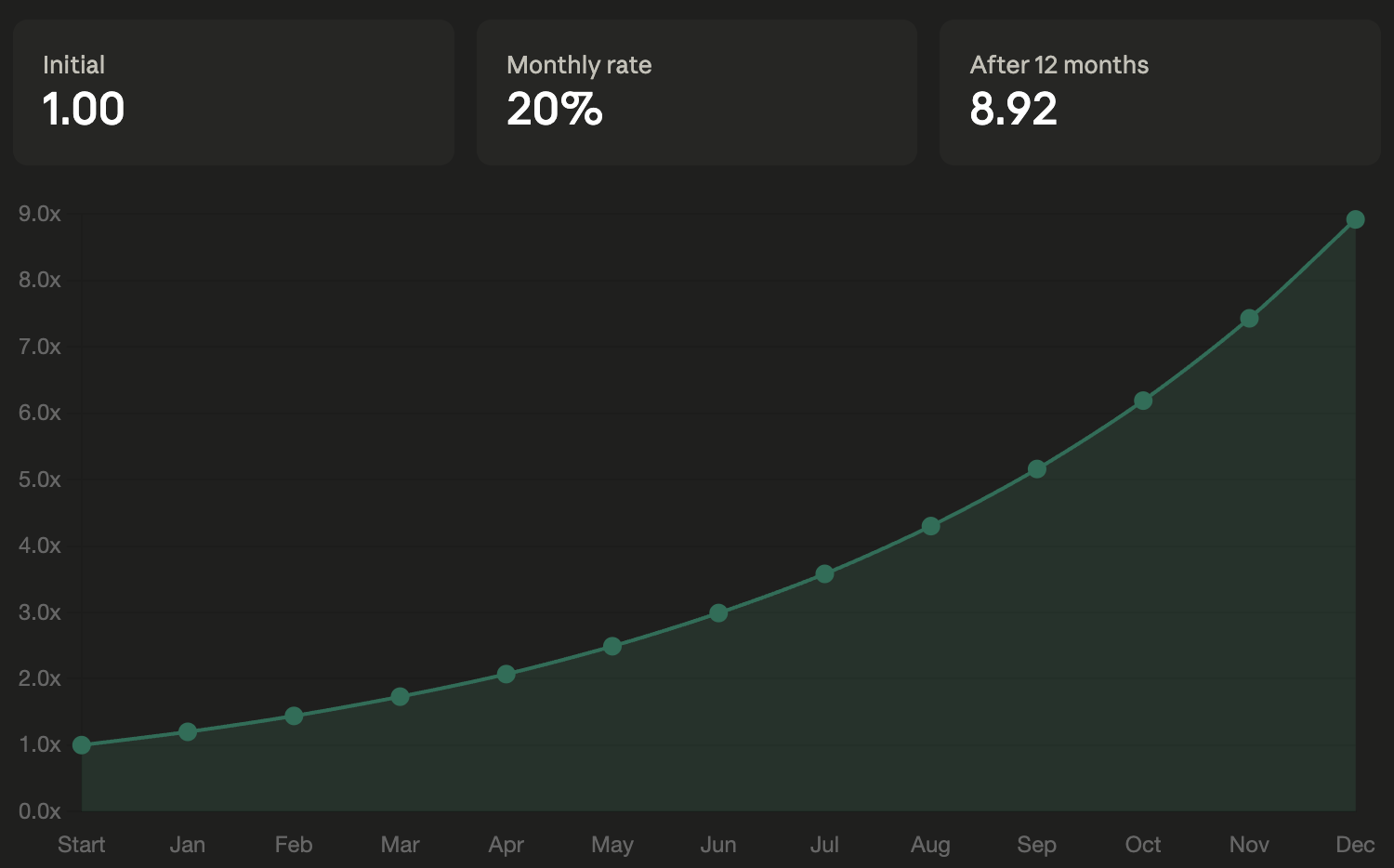

Further, cost and value scale along different curves. Cost increases linearly: you pay a fixed amount more each month. If your plan is priced at $3000, compared to the $200 plan, that is $2800 extra per month. So in January the company pays $2800 more, by February the cumulative total reaches $5600… and by December it reaches $33600. That is just a fraction of an engineer’s salary.

But a 20% better result compounds. Productive work builds on past work, in the same way that technical debt grows exponentially. In January the product is 20% better, in February it is 44% better… and by December it is about 792% better, or nearly 9 times as good as where it started.
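The two curves can be checked with a few lines of arithmetic. This sketch takes the figures from the example ($3000 versus $200 per month, a 20% monthly improvement) and assumes each month’s improvement multiplies the previous month’s result; the helper names are illustrative.

```python
def cumulative_extra_cost(plan: float, baseline: float, months: int) -> float:
    """Linear: the same monthly premium is paid again every month."""
    return (plan - baseline) * months


def compounded_improvement(rate: float, months: int) -> float:
    """Compounding: each month's gain builds on the previous month's result.

    Returns the improvement over the starting point (0.44 means 44% better).
    """
    return (1 + rate) ** months - 1


for month in (1, 2, 12):
    cost = cumulative_extra_cost(3000, 200, month)
    gain = compounded_improvement(0.2, month)
    print(f"month {month}: ${cost} extra spent, {gain:.0%} better")
```

Twelve months of 20% compounding gives (1.2)^12 − 1 ≈ 7.92, so the result is about 792% better than the starting point (roughly 8.9 times as good), while the extra spend grows only to $33600.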

The big AI labs will certainly add higher price tiers at some point. But there is a limit to what they can do. The demand for better AI tools is there, as the numbers above show, but subsidizing that usage inside the bundle cannot scale. As the labs raise the usage limits of their subsidized plans, they cannibalize their own inference revenue by pushing more customers onto those plans and away from their APIs. The problem is that they have a limited number of GPUs. If people were using the APIs, the labs could simply raise the per-token price of inference to match increased demand. But because those customers are on subsidized plans, the labs must instead lower the usage limits without lowering the price, which shrinks the subsidy and ultimately eliminates their advantage over you.

Turn Their Product Into a Feature

Oh, how the tables have turned. Normally, big AI labs are the ones turning entire startups’ products into features on their platforms. But you can actually do the same to them.

Frontier models have competition of their own. Claude Opus/Mythos, GPT Pro, Codex, Gemini, and perhaps the next version of Grok all have their respective strengths. No single model dominates in every area.

This creates an opportunity to build a superior product that delivers superior results by combining frontier models from different labs.

This could mean building a workflow that uses Claude for some tasks and Codex for others. It could also mean using an LLM council, in which several models answer the same prompt and their answers are compared or combined, to improve the quality of responses.
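The post doesn’t say how such a workflow or council is wired up, so the following is only a minimal sketch under stated assumptions: each model is wrapped in a hypothetical function that takes a prompt and returns text, `route` picks a model per task type, and `council` uses simple majority voting. None of these names are a real lab API, and the stub models just return fixed strings so the example runs offline.

```python
from collections import Counter
from typing import Callable

# A model is any function that takes a prompt and returns text.
# In practice each one would wrap a lab's own SDK or HTTP API.
ModelFn = Callable[[str], str]


def route(task_type: str, prompt: str, routes: dict[str, ModelFn], default: ModelFn) -> str:
    """Send the prompt to the model assigned to this task type."""
    model = routes.get(task_type, default)
    return model(prompt)


def council(prompt: str, members: list[ModelFn]) -> str:
    """Ask every member the same prompt and return the most common answer.

    Majority voting is the simplest aggregation; having one model review
    and synthesize the others' answers is a common alternative.
    """
    answers = [member(prompt) for member in members]
    # Ties go to the answer seen first (Counter keeps insertion order).
    return Counter(answers).most_common(1)[0][0]


# Hypothetical stand-ins for models from different labs.
def model_a(prompt: str) -> str:
    return "A"


def model_b(prompt: str) -> str:
    return "B"


def model_c(prompt: str) -> str:
    return "A"


routes = {"code": model_b, "writing": model_a}
print(route("code", "Fix this bug", routes, default=model_c))  # B
print(council("Which option is best?", [model_a, model_b, model_c]))  # A
```

The routing table is where the multi-model advantage shows up: it records which lab’s model you have found handles each kind of task best.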

The more you can improve your product’s capabilities by leaning on multiple models, the bigger your moat will be. Note that if this increases your inference costs, that’s actually good, too.

This is something that the big labs simply can’t do. It is very unlikely they will launch a product that relies substantially on a model outside of their own respective ecosystems. And if they do, the usage certainly won’t be significantly subsidized.

In the world of multi-model apps, everyone competes on a fundamentally even playing field.

Do What They Can’t

The above ideas are a strong starting place for a startup looking to build a moat in this hyper-competitive industry. But remember, you are competing not only with the big labs but also with other startups and open source projects. If you want to see our playbook for these other forms of competition, let us know!

The overarching theme is that you want to lean into things that big labs cannot. They cannot rely on other labs’ models and have limits on how much they can scale their subsidies. Therefore, you want to build products at higher price points that massively increase token usage and also rely on multiple models from different labs.

You can also consider doing other things that they can’t do.

One big but hard opportunity is human-based operations. The labs are not in that business and are unlikely to enter it given their existing scale. Creating tools that provide specialized humans-in-the-loop for certain tasks could be a substantial moat.

Another is to cater to vice industries such as memecoins, gambling, and adult services. If you do this, remember that you are at the mercy of the labs’ Terms of Service, and you should probably opt for open source models.

Subscribe to Modular Cloud
